Digital Twin Advocate: AI companion research for brain injury rehabilitation

Engagement type: Research-informed speculative design, Graduate Certificate in Digital Learning and Teaching, Victoria University

My role: Sole researcher and designer

Completed: 2025

Results: High Distinction across all three assessments (100/100, 97.5/100, 91/100)

At a glance

After a traumatic accident, many patients face a sudden silence that feels absolute. Words form in the mind but cannot travel to the mouth. Families and clinicians try to interpret gestures. But what is lost most painfully is not just communication. It is recognition.

This project asked a research question with direct design implications: what would it take for people to trust an AI companion designed to give voice back to a person who has lost the ability to speak?

The Digital Twin Advocate (DTA) is a speculative AI companion trained on a patient's pre-injury voice patterns, expressions, and communication history. It assists in rehabilitation sessions, helping clinicians and families understand what the patient cannot yet articulate. Rather than acting autonomously, it reads continuous patient cues, including gaze, facial expression, and muscle movement, seeking confirmation before acting. The patient remains in control at every step.

To introduce a technology like this responsibly, designers need to understand how the public actually perceives AI companions: where trust exists, where it breaks down, and what language and framing make the difference. This project used large-scale social network analysis of 4,343 Reddit posts spanning a decade to answer exactly that, and translated the findings into concrete design principles for the DTA.

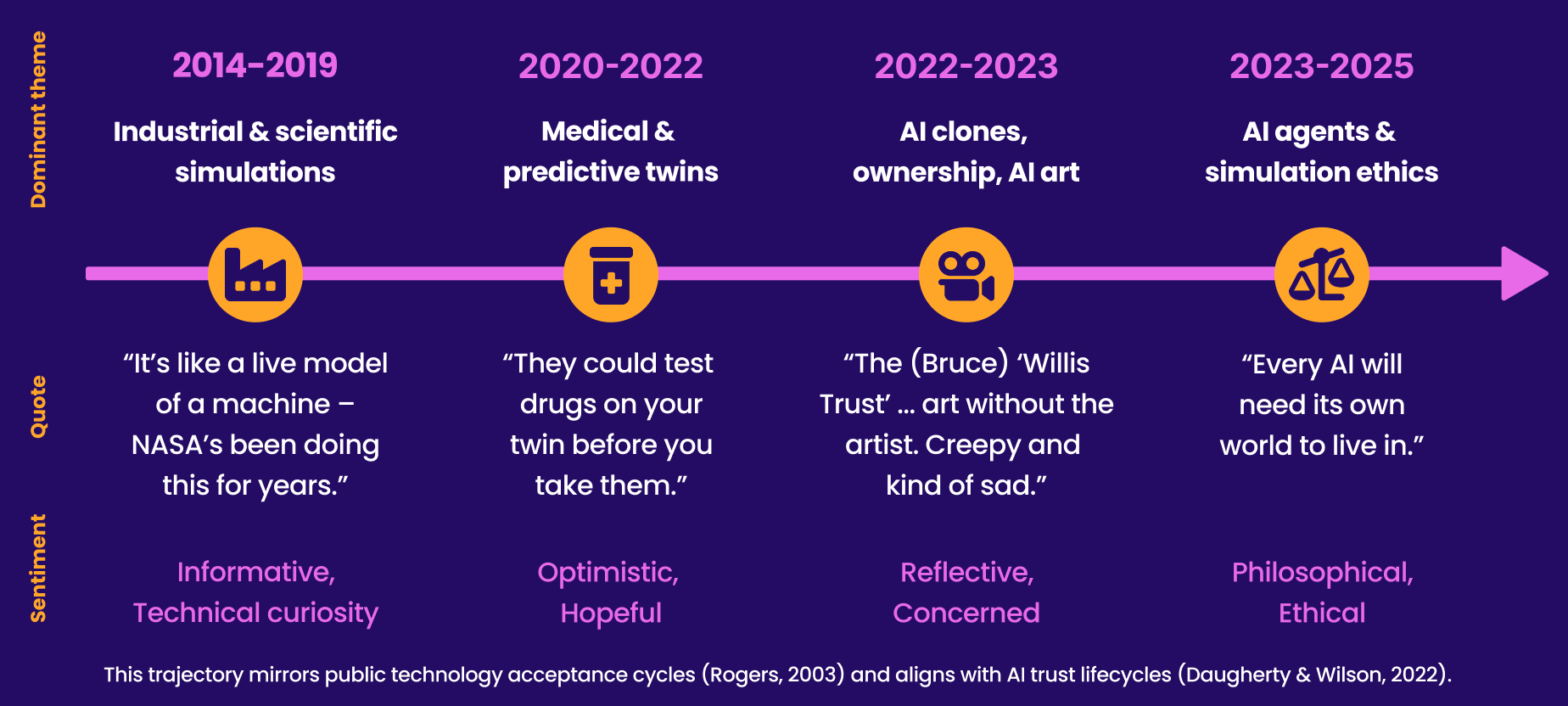

A decade of public conversation about digital twins. Discourse shifted from technical curiosity (2014) through biomedical optimism, to identity and ownership concerns, to simulation ethics, revealing where trust is built and where it fractures.

Constraints

The DTA is a speculative concept with no existing product to evaluate or test against

Data was limited to publicly available Reddit posts, a platform skewed toward technologically literate users, which is acknowledged as a limitation

Ethical data handling required full anonymisation of user identities throughout the analysis

The research had to translate meaningfully into actionable design principles, not remain at the level of academic observation

The research foundation

PART 1

Why public sentiment matters for AI design

Designers introducing novel AI technologies into sensitive contexts like healthcare, rehabilitation, and aged care cannot rely on standard usability research alone. There is no existing product to test, no users who have experienced it, and no established baseline of expectation. What exists is public conversation: how people talk about related technologies, what language generates trust, and what framing generates fear.

This project treated that conversation as a design resource.

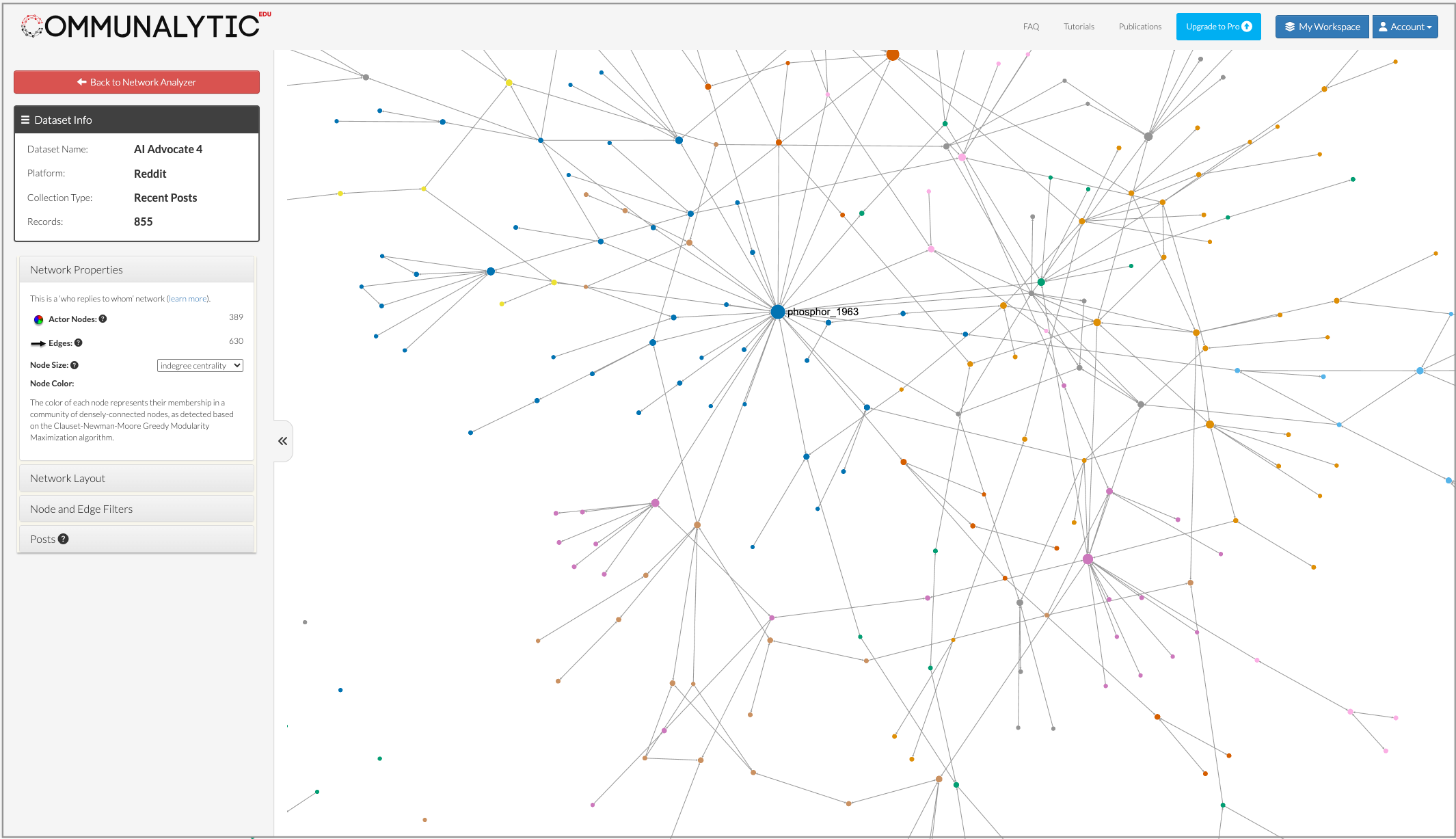

Using Communalytic, a research-grade social network analysis platform, I collected and analysed 4,343 Reddit posts across five subreddits: r/TBI (2,571 posts), r/AssistiveTechnology (868), r/technology (555), r/artificialIntelligence (192), and r/artificial (149), spanning 2014 to 2025. Posts were coded thematically using AI-assisted clustering followed by manual validation, producing six primary themes.

The framing language finding

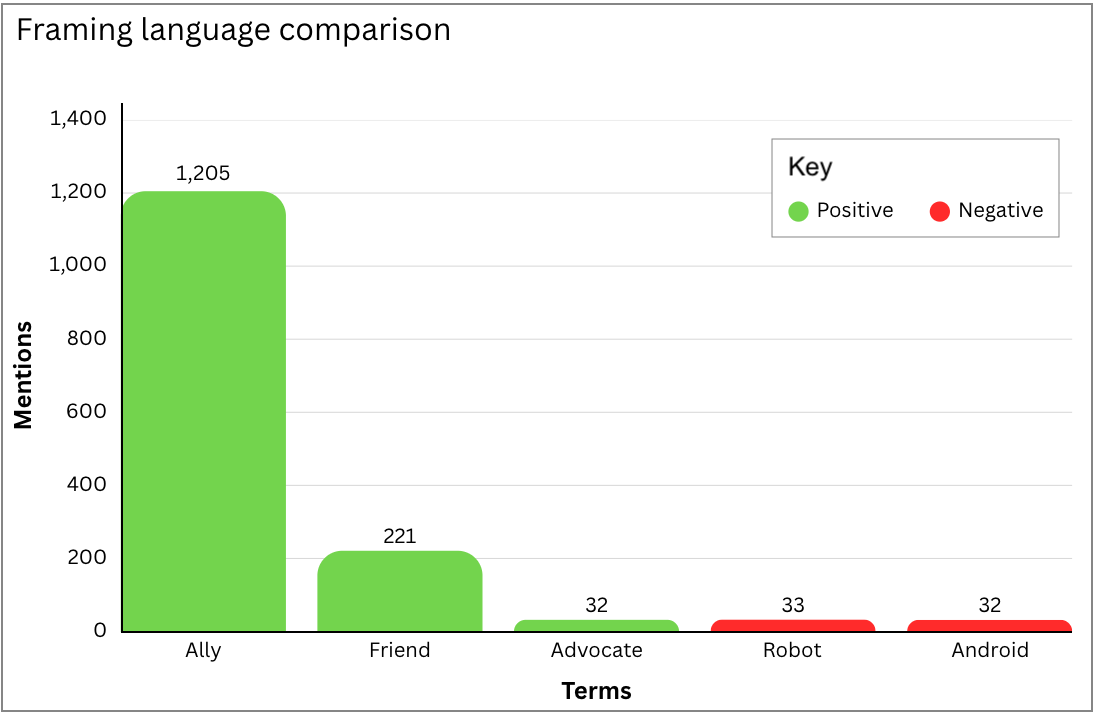

The most actionable finding from the sentiment analysis was the relationship between language choice and trust. Positive relational terms, including "ally" (1,205 mentions) and "friend" (221), dominated the dataset and consistently correlated with positive sentiment. Technical labels such as "robot" (33) and "android" (32) correlated with caution, scepticism, or discomfort.

Framing language and public trust. "Ally" outperformed "robot" by more than 36 to one. People trust technology described in relational terms and resist technology described in mechanical ones.
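The ratio in the caption can be checked directly from the reported mention counts. The sketch below is illustrative only: the counts are the figures quoted above, while `count_framing_terms` is a hypothetical helper showing one simple way such counts could be produced (whole-word matching, so plurals such as "allies" are not counted). It is not the method Communalytic uses.

```python
import re
from collections import Counter

# Framing terms discussed in the text above.
FRAMING_TERMS = ("ally", "friend", "robot", "android")

def count_framing_terms(posts):
    """Count whole-word, case-insensitive mentions of each framing term.

    Hypothetical helper for illustration; `posts` is a list of strings.
    """
    counts = Counter({term: 0 for term in FRAMING_TERMS})
    for text in posts:
        lowered = text.lower()
        for term in FRAMING_TERMS:
            counts[term] += len(re.findall(rf"\b{term}\b", lowered))
    return counts

# Mention counts as reported in the text above.
reported = {"ally": 1205, "friend": 221, "robot": 33, "android": 32}

ally_to_robot = reported["ally"] / reported["robot"]
relational = reported["ally"] + reported["friend"]
technical = reported["robot"] + reported["android"]

print(f"ally : robot = {ally_to_robot:.1f} : 1")
print(f"relational : technical = {relational / technical:.1f} : 1")
```

On the reported figures, "ally" to "robot" comes out at roughly 36.5 to one, consistent with the "more than 36 to one" claim.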

The implication for the DTA is direct: naming and communication strategy is not a branding exercise. It is a design decision with real consequences for whether patients and families engage with the technology at all.

Social network structure of the AI Advocate dataset. 855 records, 389 actor nodes, 630 edges. Node size reflects indegree centrality, indicating how often a user's posts attracted responses and their influence within the conversation.
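For readers unfamiliar with indegree centrality, a minimal sketch may help. It assumes each edge is a (replier, author) pair and normalises by the number of other nodes, which is a common convention. The user names and edges are made up for illustration, and the exact calculation inside Communalytic may differ.

```python
from collections import Counter

def indegree_centrality(edges):
    """Return normalised indegree per node.

    `edges` is a list of (source, target) pairs, read here as
    "source replied to target". Normalising by (n - 1) is an
    assumption, chosen because it is a widely used convention.
    """
    nodes = {node for edge in edges for node in edge}
    indegree = Counter(target for _source, target in edges)
    denominator = max(len(nodes) - 1, 1)
    return {node: indegree[node] / denominator for node in nodes}

# Hypothetical toy network: three users, three replies.
toy_edges = [("user_b", "user_a"), ("user_c", "user_a"), ("user_c", "user_b")]
toy_scores = indegree_centrality(toy_edges)
print(sorted(toy_scores.items(), key=lambda item: -item[1]))
```

In the toy network, `user_a` receives replies from both other users and so scores highest, which is the property the node sizes in the figure encode.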

What the data showed

Thematic analysis

The thematic analysis produced a clear ranked distribution. Robot vs Ally Framing was the dominant theme at 1,352 mentions, followed by Healthcare Use (1,135), Empathy and Companionship (765), Identity and Ethics (527), and Control and Autonomy (323). The pattern was consistent: people engaging with assistive AI in healthcare contexts are not primarily concerned with capability. They are concerned with relationship, recognition, and control.

Sentiment analysis

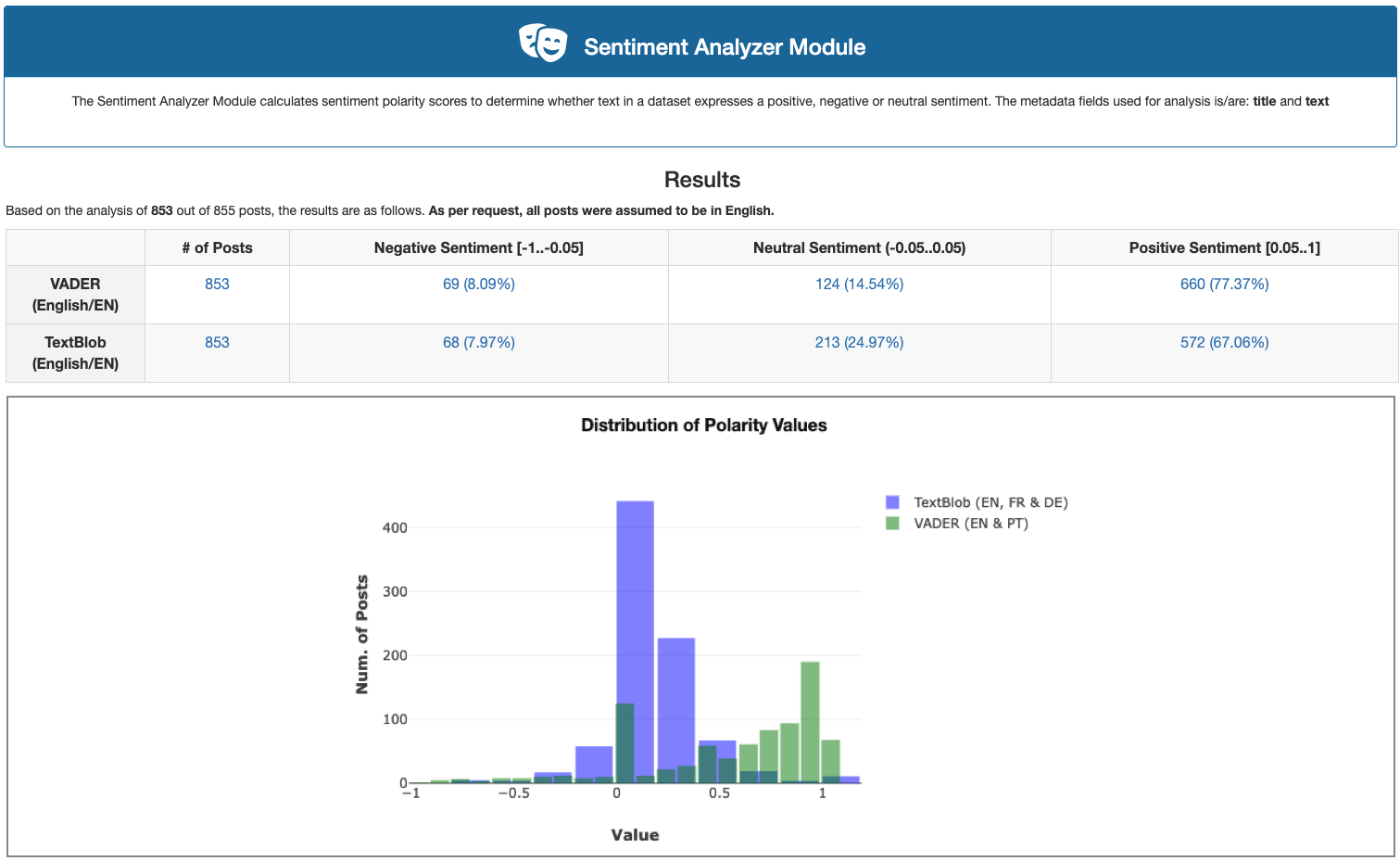

Sentiment was scored with two independent algorithms, VADER and TextBlob, and the two broadly agreed. Across 853 posts, 77% registered positive sentiment on the VADER scale, and the algorithms assigned the same category to 71.6% of posts (Cohen's kappa = 0.346, conventionally read as fair agreement once chance agreement is accounted for). The r/AssistiveTechnology community showed the strongest positive engagement, concentrated around discussions of empathy, communication tools, and identity continuity.

Sentiment analysis results, AI Advocate dataset. 77.37% positive sentiment on VADER across 853 posts. TextBlob confirmed 67.06% positive. Both algorithms agreed on 509 posts with positive polarity, the largest agreement cluster.
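Raw agreement of 71.6% and a kappa of 0.346 can look inconsistent at first glance. The sketch below shows how Cohen's kappa discounts agreement expected by chance, which is high when most posts fall into one category. It is a minimal illustration: the `label` thresholds of plus or minus 0.05 are an assumption (a common convention for VADER compound scores, applied here to both scorers), and the toy labels are made up rather than drawn from the dataset.

```python
from collections import Counter

def label(score, threshold=0.05):
    """Map a polarity score in [-1, 1] to a category (assumed thresholds)."""
    if score >= threshold:
        return "positive"
    if score <= -threshold:
        return "negative"
    return "neutral"

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two equal-length lists of category labels."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    categories = set(labels_a) | set(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1:
        return 1.0  # both raters used a single identical category
    return (observed - expected) / (1 - expected)

def implied_chance_agreement(observed, kappa):
    """Rearrange kappa = (po - pe) / (1 - pe) to recover pe."""
    return (observed - kappa) / (1 - kappa)

# Hypothetical toy labels, for illustration only.
scorer_a = ["positive", "positive", "negative", "neutral"]
scorer_b = ["positive", "neutral", "negative", "neutral"]
toy_kappa = cohens_kappa(scorer_a, scorer_b)

# Figures reported in the text above.
chance = implied_chance_agreement(observed=0.716, kappa=0.346)
print(f"toy kappa = {toy_kappa:.3f}, implied chance agreement = {chance:.3f}")
```

Working backwards from the reported figures, the implied chance agreement is about 0.57, so a large share of the 71.6% raw agreement is what two positively skewed scorers would reach by chance. That is why the kappa is only fair.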

Toxicity analysis

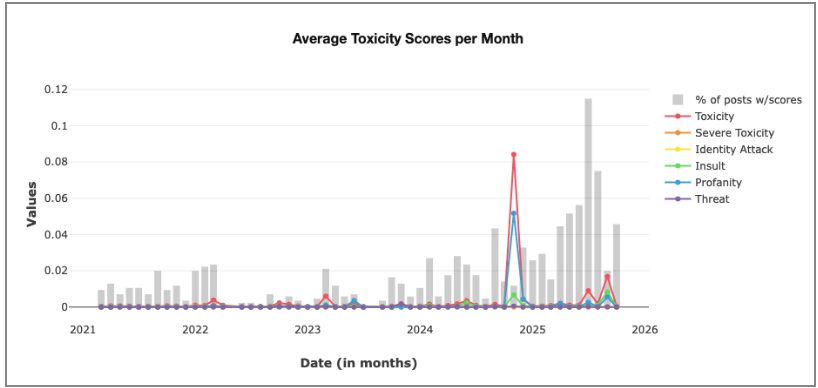

The toxicity analysis showed an unusually civil discourse space. Scores across toxicity, severe toxicity, identity attack, insult, profanity, and threat remained close to zero throughout the dataset, with only a small spike in late 2024 as AI conversation generally became more contested. When technology is framed in a care context, the conversation stays humane.

Average toxicity scores per month, 2021 to 2025. Scores remained near zero across all categories throughout the dataset. The rehabilitation and assistive technology context consistently produced more civil engagement than general AI discourse.
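The monthly averages in the chart are a simple aggregation. A minimal sketch follows, assuming each post carries an ISO date and a toxicity score between 0 and 1. The sample records are hypothetical, and the scoring step itself is outside the sketch.

```python
from collections import defaultdict
from statistics import mean

def monthly_average(records):
    """Average scores per calendar month.

    `records` is an iterable of (iso_date, score) pairs, where iso_date
    is a 'YYYY-MM-DD' string and score is a float in [0, 1].
    """
    by_month = defaultdict(list)
    for iso_date, score in records:
        by_month[iso_date[:7]].append(score)  # 'YYYY-MM' is the month key
    return {month: mean(scores) for month, scores in sorted(by_month.items())}

# Hypothetical sample records, for illustration only.
sample = [("2024-11-03", 0.02), ("2024-11-20", 0.04), ("2024-12-01", 0.10)]
averages = monthly_average(sample)
print(averages)
```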

Insight

"Ally" and "friend" correlate with strongly positive sentiment. "Robot" and "clone" generate fear and resistance. The DTA's name, framing, and every piece of onboarding language should be designed around this finding.

Translating research into design principles

PART 2

Three stakeholders, competing needs

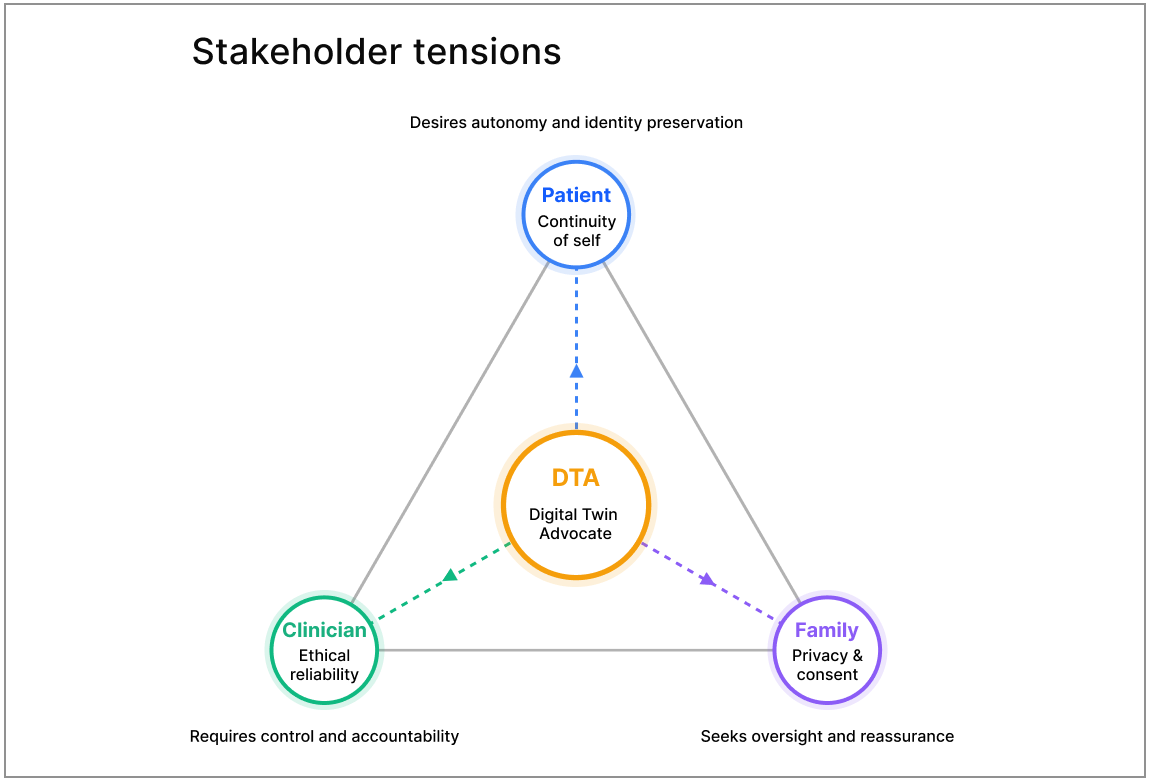

One of the most important findings from the thematic analysis was that the rehabilitation context involves not one user but three, and their needs are not always aligned.

The patient wants continuity of self: the DTA should feel like an extension of who they are, not a replacement or an interpreter. The family wants privacy, consent, and reassurance that their loved one is not being automated. The clinician wants ethical reliability and clinical accountability.

These are not the same goal. In many moments they are in direct tension. The DTA cannot be designed to satisfy all three simultaneously. It must be designed to mediate between them, keeping the patient at the centre of every decision while remaining trustworthy to the people around them.

Navigating stakeholder tensions. The DTA operates at the intersection of competing needs, mediating between stakeholder demands rather than satisfying a shared goal. Patient, family, and clinician each require something different, and those requirements sometimes conflict.

The interaction model

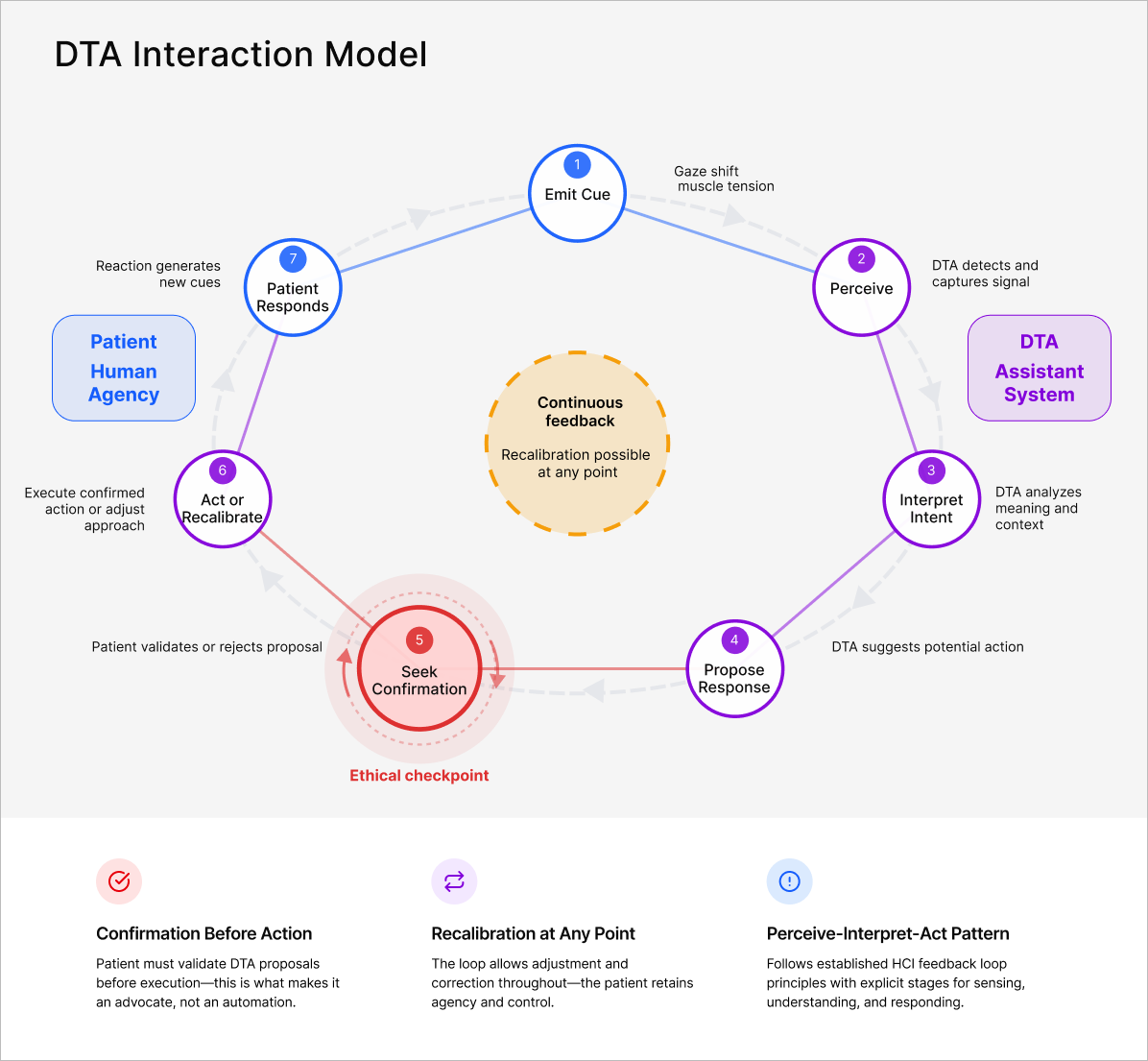

The core design challenge for the DTA is not what it does, but how it asks permission before doing it. An AI system that acts without confirmation is an automation. An AI system that seeks confirmation before acting, and recalibrates when that confirmation is withheld, is an advocate.

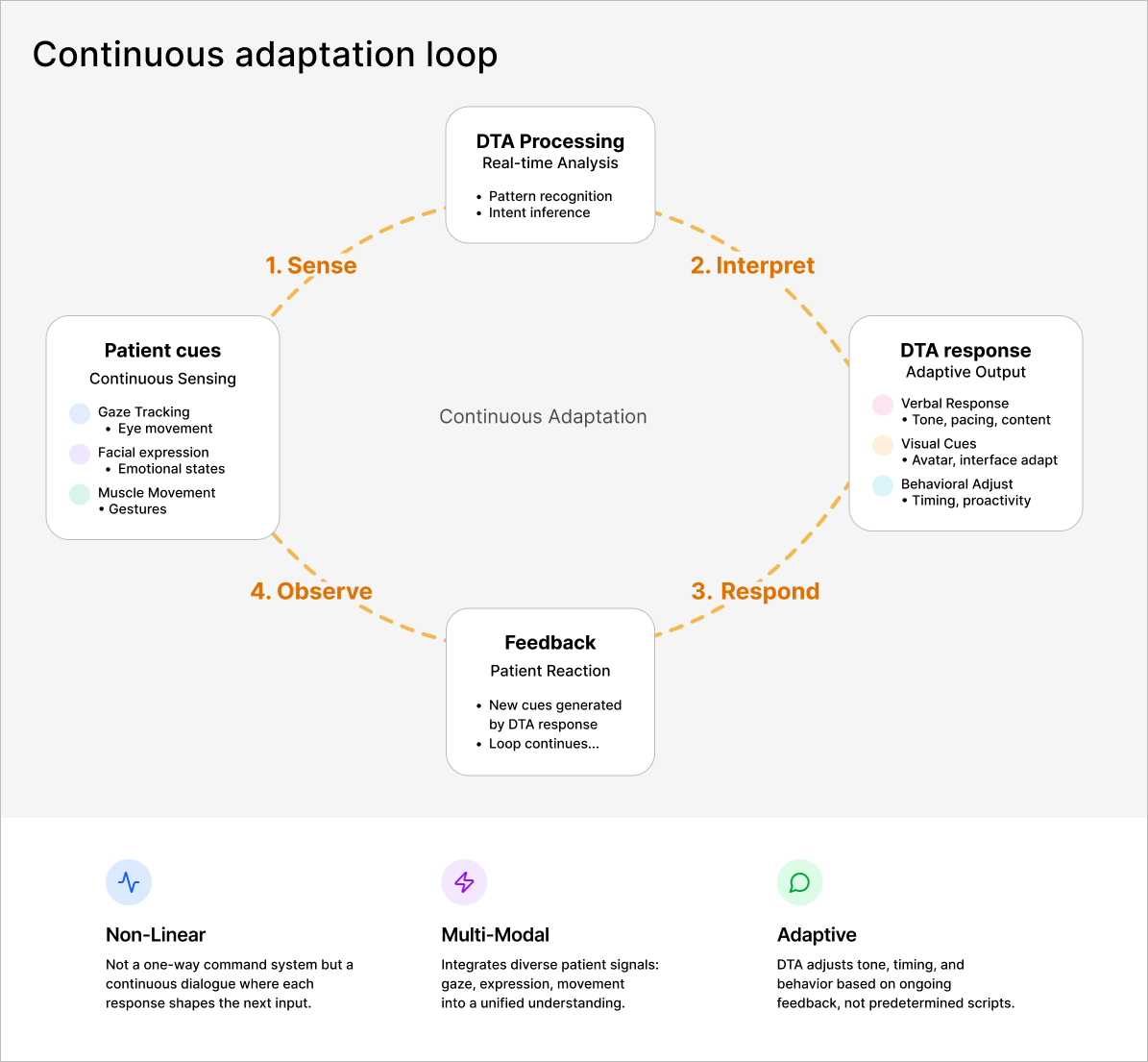

The DTA interaction model is a perceive-interpret-act feedback loop with confirmation as the mandatory ethical checkpoint. The patient emits a cue: a gaze shift, a muscle tension, an attempted expression. The DTA senses the signal, interprets intent and context, proposes a response, seeks confirmation from the patient, and only then acts. If the patient withholds confirmation, the DTA recalibrates. The loop is continuous and the patient can redirect it at any point.

DTA interaction model: a perceive-interpret-act feedback loop. Confirmation before action is the ethical checkpoint that distinguishes the DTA as an advocate rather than an automation. Recalibration is possible at every stage.

Continuous adaptation across patient cues and DTA response. Patient cues feed into real-time analysis. DTA responses adapt continuously based on patient feedback.
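The confirmation checkpoint described above can be sketched as a small control loop. This is a conceptual illustration, not an implementation of the DTA, which remains speculative. Every name here (`CANDIDATES`, `interpret`, `advocate_step`, the cue label, and the candidate messages) is hypothetical, and real sensing and interpretation are replaced with a lookup table.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical mapping from a sensed cue to ranked candidate meanings.
CANDIDATES = {
    "gaze_left": ["I would like water.", "I would like to rest."],
}

@dataclass
class Proposal:
    cue: str
    message: str

def interpret(cue: str, rejected: List[str]) -> Optional[Proposal]:
    """Propose the highest-ranked meaning the patient has not rejected."""
    for message in CANDIDATES.get(cue, []):
        if message not in rejected:
            return Proposal(cue, message)
    return None

def advocate_step(
    cue: str,
    confirm: Callable[[Proposal], bool],
    act: Callable[[Proposal], None],
    max_attempts: int = 3,
) -> Optional[Proposal]:
    """Sense, interpret, propose, confirm, then act.

    `act` is only ever called after `confirm` returns True. When
    confirmation is withheld, the proposal is recorded as rejected and
    the loop recalibrates with the next candidate.
    """
    rejected: List[str] = []
    for _ in range(max_attempts):
        proposal = interpret(cue, rejected)
        if proposal is None:
            return None  # nothing left to propose; do not act
        if confirm(proposal):
            act(proposal)
            return proposal
        rejected.append(proposal.message)  # recalibrate
    return None

# Usage: the patient rejects the first proposal and confirms the second.
spoken: List[str] = []
result = advocate_step(
    "gaze_left",
    confirm=lambda p: p.message == "I would like to rest.",
    act=lambda p: spoken.append(p.message),
)
print(spoken)
```

The design choice worth noting is structural: there is no code path that reaches `act` without passing through `confirm`, which is what separates an advocate from an automation in the model above.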

Decision

Confirmation before action is not a usability feature. It is the ethical foundation of the entire system. Without it, the DTA becomes a system that speaks for the patient rather than with them, which the research shows is exactly what people fear most about AI companions in care settings.

Design recommendations

PART 3

The research produced seven concrete recommendations, each grounded in the sentiment and thematic data.

Language and branding

Frame the DTA as a compassionate ally, not a machine. Language like "your voice, remembered" centres the patient and aligns with the framing terms the data shows build trust. The name of the system, the words used in onboarding, and the tone of every interaction are all design decisions.

Consent and transparency

Provide an interactive dashboard showing what patient data is used, where it came from, and how to change permissions at any time. Transparency about data use is interpreted as emotional safety, not just compliance.

Human-in-the-loop design

The DTA should visually confirm patient cues before acting: "I think you meant yes. Is that right?" This keeps the patient at the centre of every interaction and prevents the system from sliding into automation.

Emotional feedback

Train the DTA to adjust tone, pacing, and facial expressions based on patient fatigue or discomfort. The research found that AI described as responsive to emotional state correlates with significantly higher trust.

Family engagement

Involve families in the onboarding process to build shared understanding of how advocacy works and what the DTA can and cannot do. The moment a family member fears their loved one is being replaced is predictable. It is also designable.

Governance and oversight

An ethics committee reviewing data management and transparency quarterly ensures accountability. Visible human oversight was consistently cited in the research as a condition of trust, not an optional addition.

Communication strategy

Tell recovery stories focused on regained agency, not technical capability. The emotional payoff is not that the DTA works. It is that the patient is heard again.

Outcome

By listening to how a decade of public conversation framed AI companions, this project produced design principles grounded in what people actually need to trust this technology, not what designers assume they need.

Outcome

The DTA remains a concept. The research and design principles that underpin it are real, rigorous, and directly applicable to any organisation introducing AI-assisted communication tools in healthcare or rehabilitation contexts.

Large-scale social network analysis is not a standard design research tool. For speculative AI products, where there are no existing users to interview and no deployed product to evaluate, it is precisely suited. This project demonstrates how public discourse can act as both a diagnostic tool and a design guide: surfacing what people fear, what language builds trust, and what principles need to be in place before a technology like this can be introduced responsibly.

Julie’s story

I wrote a short story to go with this submission, as a way to capture the human moment the design is trying to protect:

"For the first time, I am not being discussed. I am part of the conversation again. I am speaking."

That is the design goal. Everything else, the interaction model, the confirmation checkpoint, and the language strategy, exists to make that moment possible.

Academic context

Unit: ADM6013 Analysing the Web and Social Networks, Victoria University

Qualification: Graduate Certificate in Digital Learning and Teaching

Assessment results

Assignment 1 (Data Collection Case Study): 100/100, High Distinction

Assignment 2 (Digital Twin Presentation): 97.5/100, High Distinction

Assignment 3 (Digital Twin Report): 91/100, High Distinction

Selected lecturer feedback

"You've not only addressed every assessment criterion, you've taken us on a journey that surfaces new possibilities and even new destinations. The standout highlight was the timeline of discourse. That level of contextual grounding shows both passion and genuine insight."

Natasha Dwyer - Associate Professor, Victoria University

"Your analysis demonstrates an impressive balance of technical understanding, ethical reflection, and emotional intelligence. The synthesis of sentiment data with human-centred design principles is particularly strong. Your framing of the Digital Twin Advocate as a compassionate intermediary rather than an automation is powerful and original. This is future-facing, responsible design work that shows both imagination and rigour."

Nick Petch - Guest Lecturer, Victoria University
