Preparing aged-care facilities for assessor-led funding under AN-ACC

Telstra Health’s Clinical Manager is a core operational system used daily by residential aged-care providers to manage clinical documentation, administration, and compliance. It sits at the centre of how care is recorded, evidence is assembled, and regulatory obligations are met.

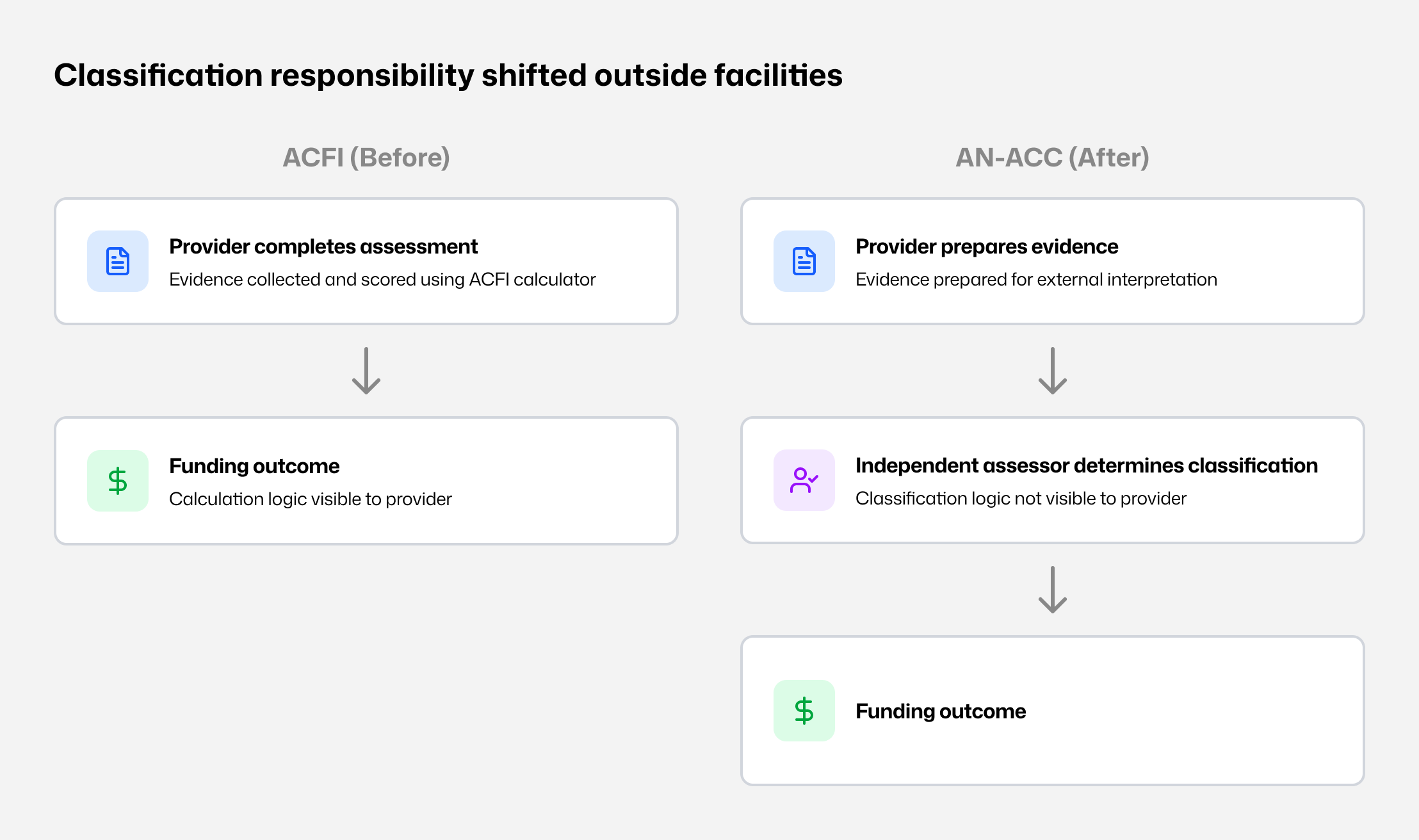

Following the Royal Commission into Aged Care Quality and Safety, funding reform replaced ACFI with AN-ACC and changed how resident classifications are determined. Providers no longer determine funding outcomes but remain responsible for the quality and completeness of evidence reviewed by independent assessors. This work updated Clinical Manager to support assessor-aligned preparation workflows within that shift.

Clinical Manager supports approximately 60,000 residential aged-care beds nationally, so this update took place within a large-scale AN-ACC funding and compliance environment.

At a glance

System shift: From provider-led funding calculation to assessor-aligned preparation

Capability: Evidence traceability organised by national assessment structure

Scope: Assessment preparation embedded within a live, legacy care platform

Operating environment

Context

Organisation: Telstra Health (Aged & Disability)

Environment: Residential aged-care software platform (Clinical Manager)

Users: Clerical and clinical staff preparing residents for assessment

Role: Senior UX Designer

Timeframe: 6-month delivery window

Constraints

Government-defined funding and assessment model

Classification authority external to providers

Long-established platform with embedded legacy workflows

High audit and compliance exposure

Assessment authority shifted outside facilities

PART 1

Policy context

The Royal Commission identified systemic issues in aged-care funding, including inconsistent classification practices and incentives misaligned with resident need. In response, the Australian Government replaced ACFI with AN-ACC to standardise assessment nationally and remove funding determination from providers.

This was not a product change.

It was a structural shift in responsibility and accountability.

The ACFI operating model

Under ACFI:

Assessments were completed internally.

Evidence was collected, scored, and reviewed in-house.

Funding outcomes could be estimated using a calculator.

Scoring logic was visible to providers.

This transparency shaped behaviour. Because outcomes could be anticipated, preparation practices aligned to known scoring mechanics. During the Royal Commission, this dynamic was cited as a risk to equity and consistency, including a tendency to optimise around residents who fell into higher funding bands.

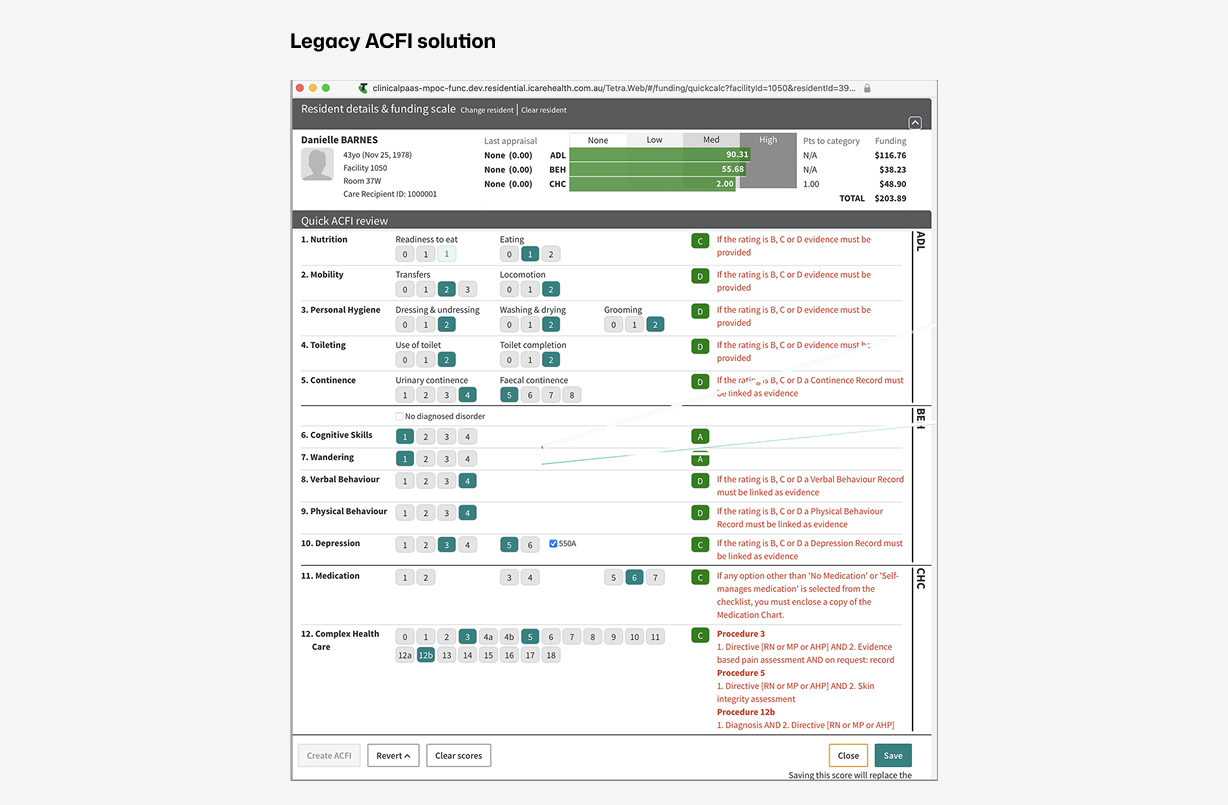

Clinical Manager reflected this model through calculator-led workflows and score-driven interpretation.

What AN-ACC changed

AN-ACC shifted assessment responsibility away from providers.

Under the new model:

Independent assessors apply a national assessment tool.

Classifications are no longer determined internally.

Funding depends on assessor interpretation of submitted evidence.

Classification logic is not visible to providers.

Internal systems shifted from assessment to preparation.

Early interviews with service managers highlighted concern about funding risk, assessor interpretation, and whether existing documentation would be sufficient under external review.

Classification shift - Assessment moved from provider-led scoring to external classification.

Loss of calculation visibility

At rollout, the logic used by assessors to determine classifications was not available to providers. Preparation quality now depended on whether assessors could:

locate relevant evidence,

understand its context, and

interpret resident need accurately.

This increased the importance of structured, complete, and easily retrievable evidence.

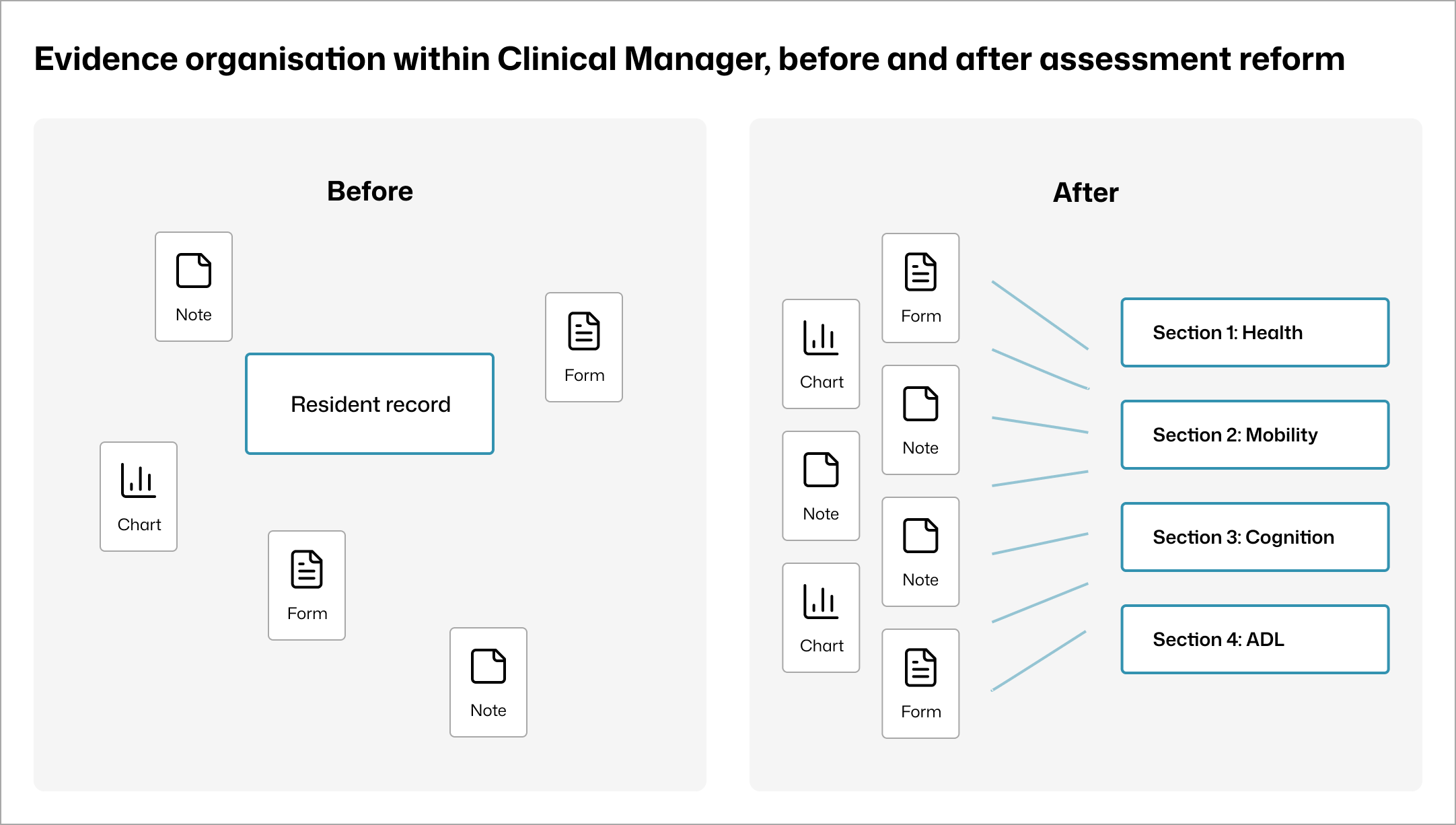

Resulting system mismatch

Within Clinical Manager:

Existing workflows reinforced a scoring mindset that no longer applied.

Evidence existed across notes, charts, and forms but was not organised by assessment section.

Calculator patterns implied predictability and control that providers no longer had.

The primary risk was not usability.

It was preparing staff for a process that no longer existed.

Evidence storage before and after - Documentation existed but was not organised by AN-ACC sections.

Insight

Staff were not seeking to influence outcomes. They wanted clarity on how to prepare evidence that would withstand external review.

Shaping a preparation system aligned to assessor interpretation

PART 2

System intent

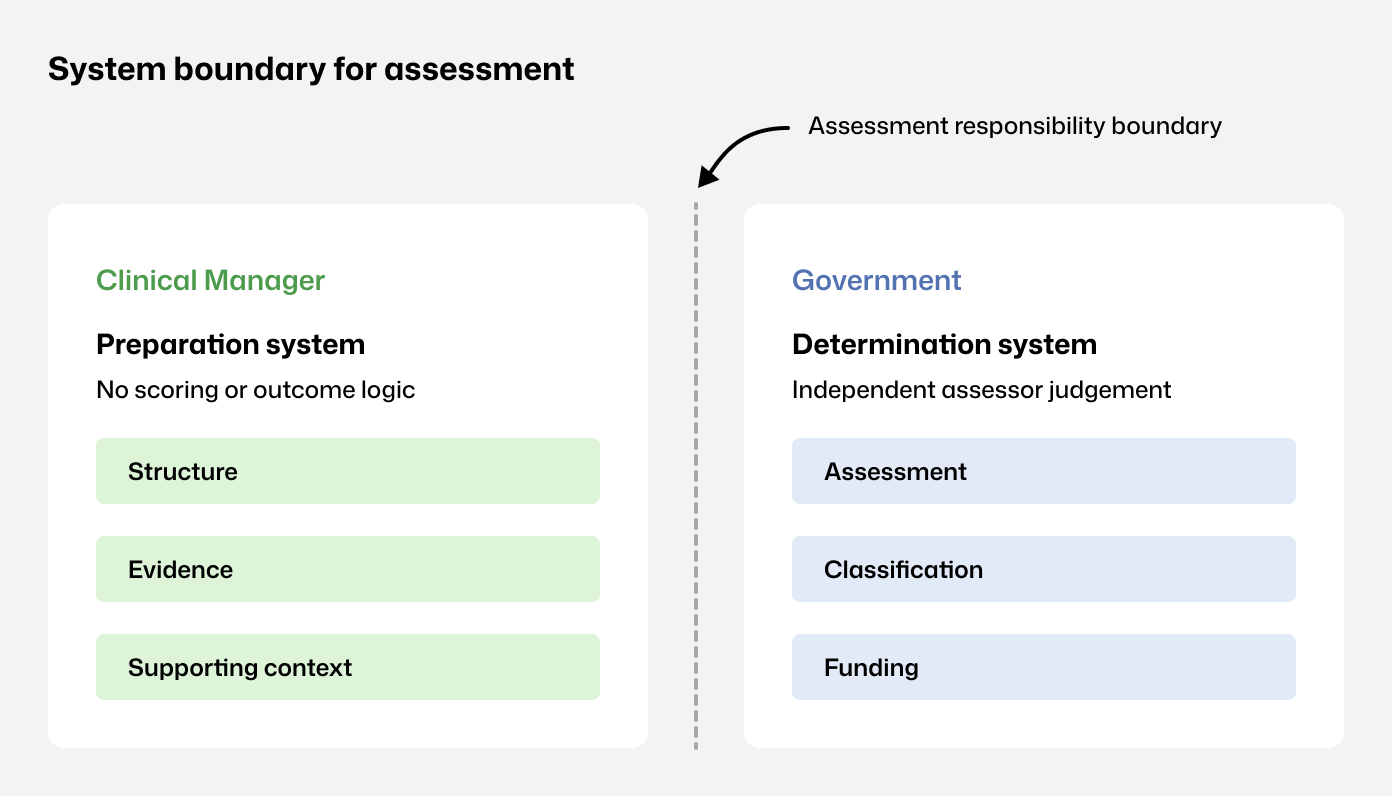

Before interface decisions, the system boundary was made explicit.

The system would:

support preparation for assessment,

reflect the AN-ACC assessment structure,

surface evidence in assessment context.

The system would not:

determine classifications,

predict funding outcomes,

reproduce ACFI optimisation patterns.

Assessment boundaries - Clinical Manager supports preparation; assessment and funding sit externally.

Structural decisions

Decision: Avoid optimisation patterns that imply control over classification.

ACFI calculator - Calculator-led workflows reinforced internal scoring.

Funding calculators and prediction logic were not reproduced inside Clinical Manager.

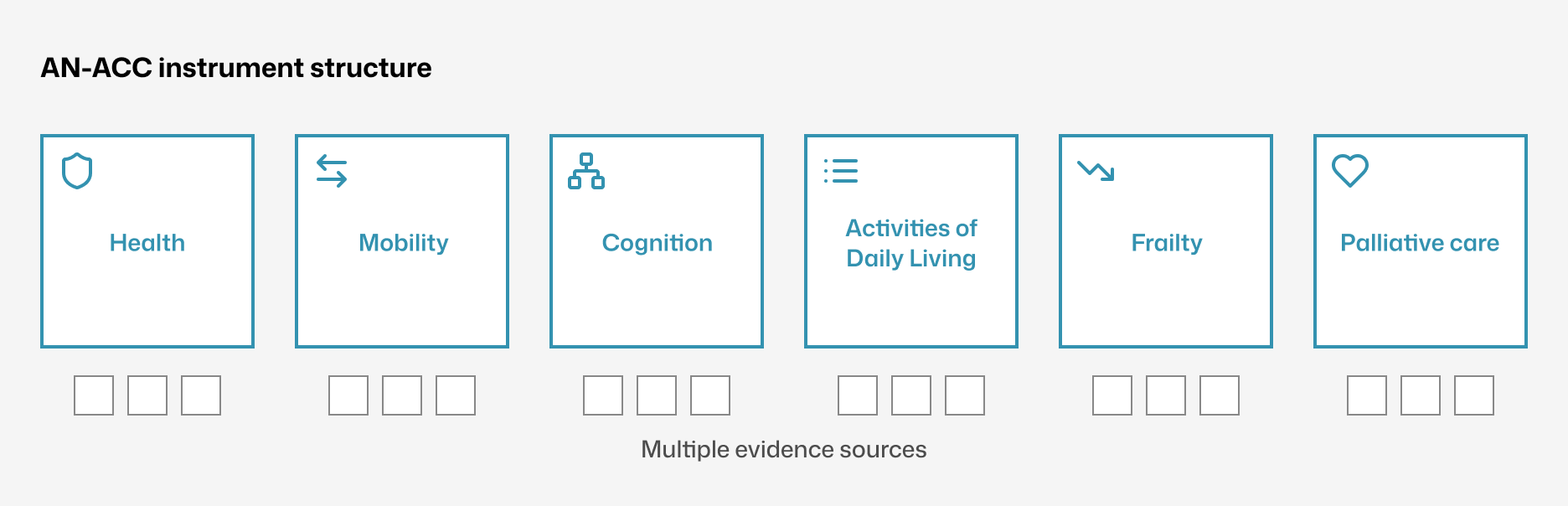

Decision: Use AN-ACC instruments as the primary information architecture.

Assessment navigation mirrored the national instrument structure, creating a shared definition of completeness and reducing missed sections.

Structure to follow AN-ACC instrument - Navigation mirrors the AN-ACC structure.
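
A minimal sketch of how a section-based structure can double as a definition of completeness. The section names, the `Preparation` class, and the resident identifier are hypothetical and used only for illustration; they are not the official AN-ACC instrument list or Clinical Manager's data model.

```python
from dataclasses import dataclass, field

# Hypothetical section names for illustration only;
# not the official AN-ACC instrument list.
SECTIONS = ["mobility", "cognition", "behaviour", "pressure_injury_risk"]


@dataclass
class Preparation:
    """Preparation state for one resident, organised by assessment section."""
    resident_id: str
    # Section name -> number of evidence items linked so far.
    linked: dict = field(default_factory=dict)

    def missing_sections(self) -> list:
        """Sections with no linked evidence, in instrument order."""
        return [s for s in SECTIONS if self.linked.get(s, 0) == 0]

    def is_complete(self) -> bool:
        """Complete means every section has at least one evidence item."""
        return not self.missing_sections()


prep = Preparation("R-001", {"mobility": 2, "behaviour": 1})
print(prep.missing_sections())  # ['cognition', 'pressure_injury_risk']
print(prep.is_complete())       # False
```

Because the section list is fixed and ordered, "complete" has a single meaning for everyone preparing a resident, and no score or funding estimate is involved.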

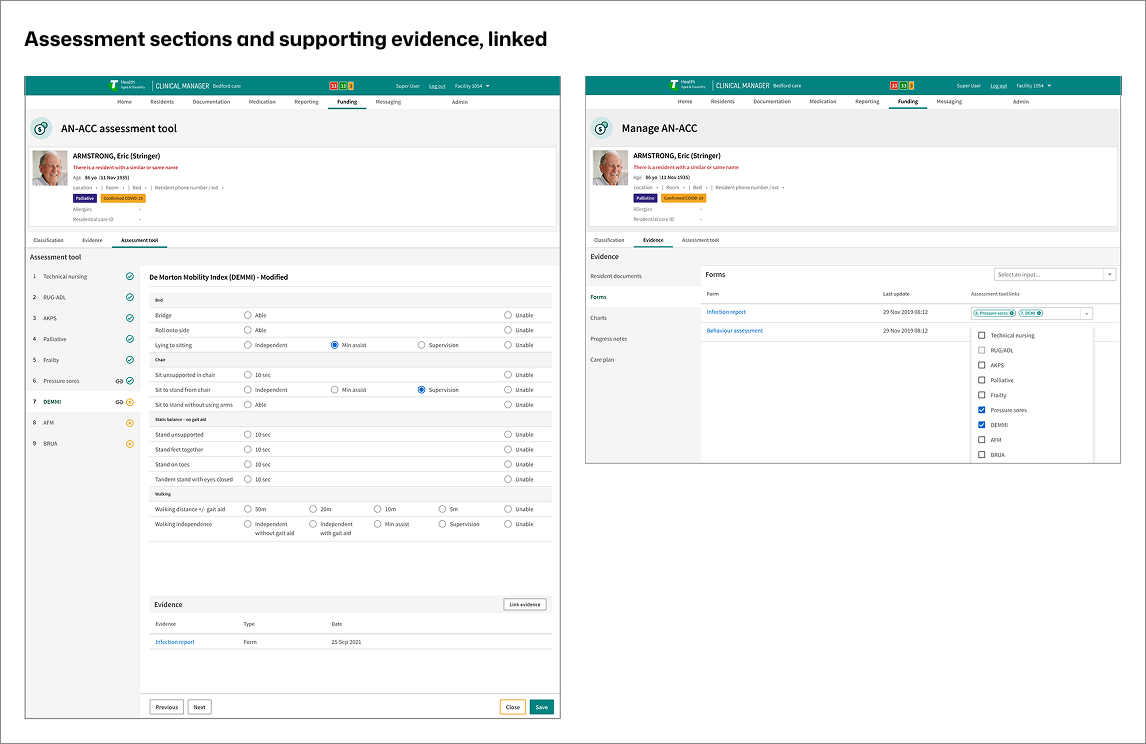

Decision: Link evidence at the assessment-section level.

Evidence remained part of the resident record while becoming explicitly linkable to assessment sections, enabling traceability without duplication.

Direct link to supporting evidence - Evidence is linked to assessment sections without duplication.
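
Linking without duplication can be sketched as storing references rather than copies. This is an illustrative model under stated assumptions, not the Clinical Manager implementation: `EvidenceItem`, `record`, `links`, the section names, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceItem:
    """One piece of documentation held in the resident record (hypothetical)."""
    evidence_id: str
    source: str   # e.g. "progress_note", "chart", "form"
    summary: str


# The resident record is the single store of evidence, keyed by id.
record = {
    "E1": EvidenceItem("E1", "progress_note", "Assisted with transfers"),
    "E2": EvidenceItem("E2", "chart", "Falls risk chart"),
}

# Assessment sections hold evidence ids only, so nothing is copied.
# Section names are illustrative, not official AN-ACC instrument names.
links = {
    "mobility": ["E1", "E2"],
    "pressure_injury_risk": ["E2"],
}


def evidence_for(section: str) -> list:
    """Resolve a section's links back to items in the resident record."""
    return [record[eid] for eid in links.get(section, [])]


def sections_citing(evidence_id: str) -> list:
    """Trace an evidence item to every section that references it."""
    return sorted(s for s, ids in links.items() if evidence_id in ids)


print([e.evidence_id for e in evidence_for("mobility")])  # ['E1', 'E2']
print(sections_citing("E2"))  # ['mobility', 'pressure_injury_risk']
```

The same item can support more than one section while existing once in the record, which gives traceability in both directions: from section to evidence, and from evidence to section.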

User experience prioritisation

Predictability governed the UX:

stable navigation by assessment section,

consistent layouts across instruments,

visible evidence status in context,

linear progression over branching.

This reduced decision load while staff continued routine care.

What was rejected

Facility-side scoring or funding prediction tools

Free-form evidence repositories detached from assessment structure

Parallel funding packs outside the resident record

Global evidence visibility without role-based controls

Each option either weakened traceability or implied control over outcomes, conflicting with AN-ACC’s separation of preparation and determination.

Capability created and supporting evidence

PART 3

Capability created

Assessor-aligned preparation structure inside Clinical Manager

Clear separation between preparation and external determination

Evidence

Structural impact:

One preparation flow replaced four to five disconnected screens

Assessment sections and linked evidence presented together

Increased staff confidence during readiness activities

Measured outcomes:

Evidence lookup time reduced by ~70% (from 6–10 min to under 2 min)

Reduced follow-up clarification after preparation

What was not proven

Classification accuracy

Funding outcomes

Long-term documentation behaviour change

AN-ACC funding portal within Clinical Manager - Interface showing how evidence is organised by assessment section within the live Clinical Manager environment.

Outcome

Facilities regained control over preparation quality without implying control over classification outcomes.

Reflection

AN-ACC reframed funding preparation as an evidence and interpretation problem.

By aligning system structure to the assessor lens and making evidence explicit within the resident record, Clinical Manager implemented a preparation model capable of supporting reform-driven change without reworking underlying care workflows.